How To Draw Bounding Boxes To Classify Man, Woman In Images

How to Perform Face Detection with Deep Learning

Last Updated on August 24, 2020

Face detection is a computer vision problem that involves finding faces in photos.

It is a trivial problem for humans to solve and has been solved reasonably well by classical feature-based techniques, such as the cascade classifier. More recently, deep learning methods have achieved state-of-the-art results on standard benchmark face detection datasets. One example is the Multi-task Cascade Convolutional Neural Network, or MTCNN for short.

In this tutorial, you will discover how to perform face detection in Python using classical and deep learning models.

After completing this tutorial, you will know:

- Face detection is a non-trivial computer vision problem for identifying and localizing faces in images.

- Face detection can be performed using the classical feature-based cascade classifier using the OpenCV library.

- State-of-the-art face detection can be achieved using a Multi-task Cascade CNN via the MTCNN library.

Kick-start your project with my new book Deep Learning for Computer Vision, including step-by-step tutorials and the Python source code files for all examples.

Let's get started.

- Update Nov/2019: Updated for TensorFlow v2.0 and MTCNN v0.1.0.

How to Perform Face Detection With Classical and Deep Learning Methods

Photo by Miguel Discart, some rights reserved.

Tutorial Overview

This tutorial is divided into 4 parts; they are:

- Face Detection

- Test Photographs

- Face Detection With OpenCV

- Face Detection With Deep Learning

Face Detection

Face detection is a problem in computer vision of locating and localizing one or more faces in a photograph.

Locating a face in a photograph refers to finding the coordinate of the face in the image, whereas localization refers to demarcating the extent of the face, often via a bounding box around the face.

A general statement of the problem can be defined as follows: Given a still or video image, detect and localize an unknown number (if any) of faces.

— Face Detection: A Survey, 2001.

Detecting faces in a photograph is easily solved by humans, although it has historically been challenging for computers given the dynamic nature of faces. For example, faces must be detected regardless of orientation or angle they are facing, light levels, clothing, accessories, hair color, facial hair, makeup, age, and so on.

The human face is a dynamic object and has a high degree of variability in its appearance, which makes face detection a difficult problem in computer vision.

— Face Detection: A Survey, 2001.

Given a photograph, a face detection system will output zero or more bounding boxes that contain faces. Detected faces can then be provided as input to a subsequent system, such as a face recognition system.

Face detection is a necessary first-step in face recognition systems, with the purpose of localizing and extracting the face region from the background.

— Face Detection: A Survey, 2001.

There are perhaps two main approaches to face detection: feature-based methods that use hand-crafted filters to search for and detect faces, and image-based methods that learn holistically how to extract faces from the entire image.


Test Photographs

We need test images for face detection in this tutorial.

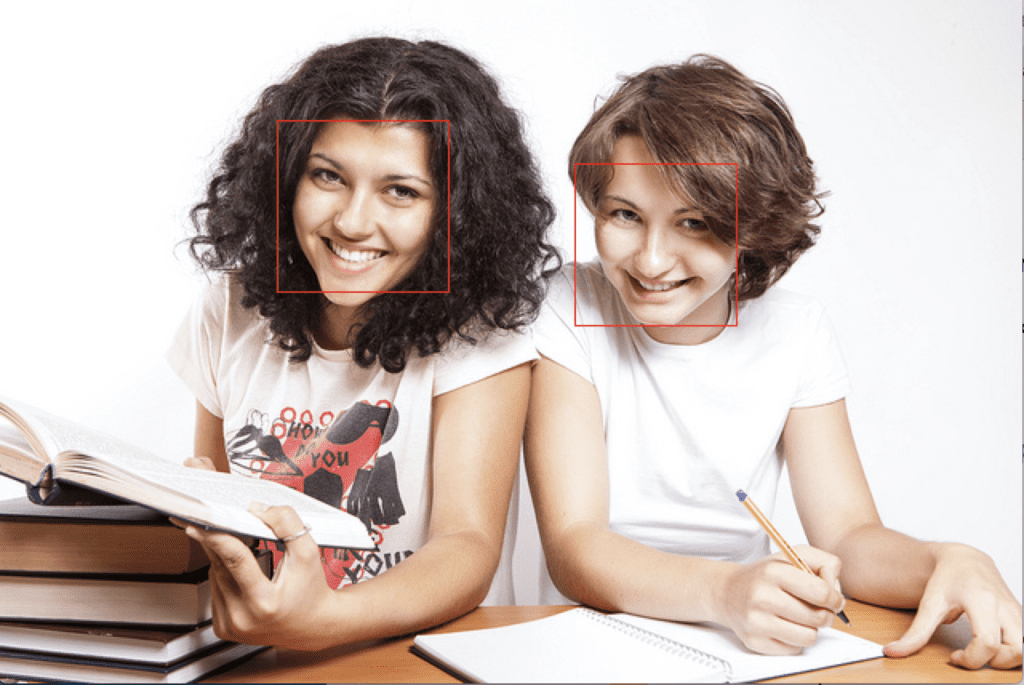

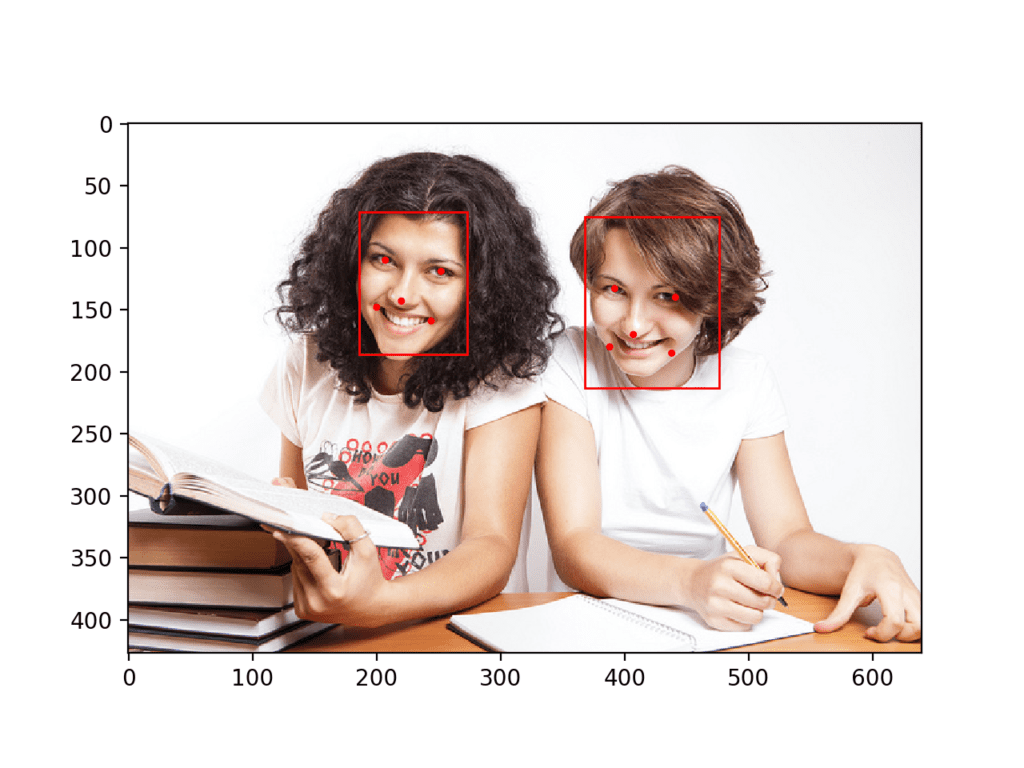

To keep things simple, we will use two test images: one with two faces, and one with many faces. We're not trying to push the limits of face detection, but to demonstrate how to perform face detection with normal front-on photographs of people.

The first image is a photograph of two college students taken by CollegeDegrees360 and made available under a permissive license.

Download the image and place it in your current working directory with the filename 'test1.jpg'.

College Students (test1.jpg)

Photo by CollegeDegrees360, some rights reserved.

- College Students (test1.jpg)

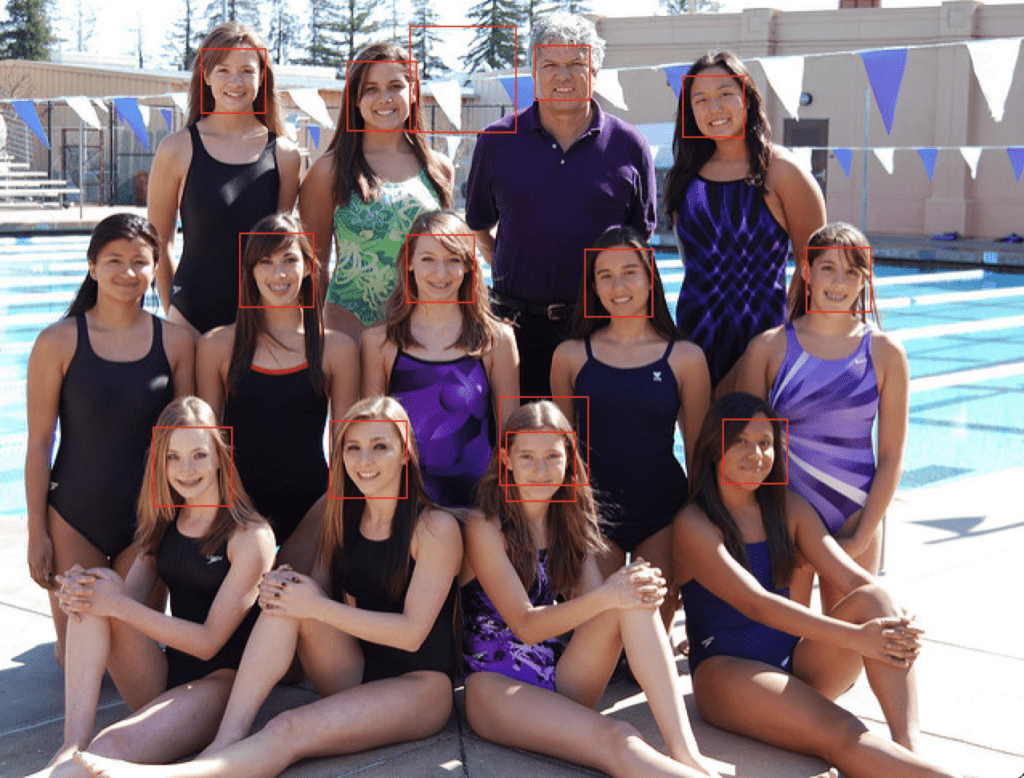

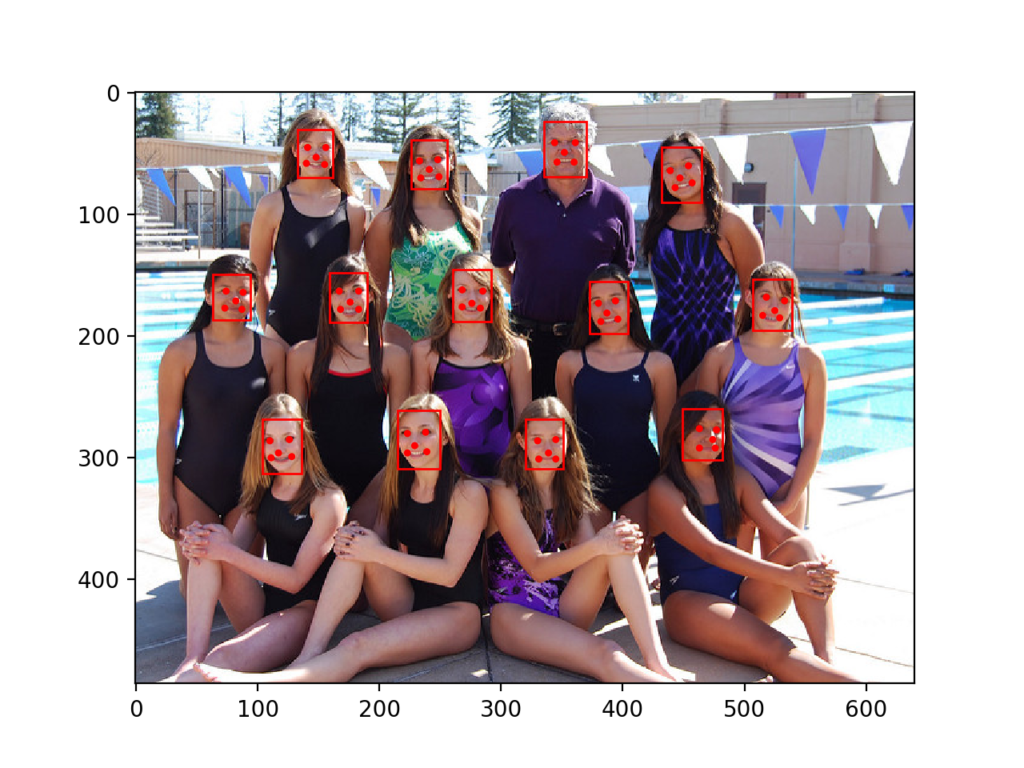

The second image is a photograph of a number of people on a swim team taken by Bob n Renee and released under a permissive license.

Download the image and place it in your current working directory with the filename 'test2.jpg'.

Swim Team (test2.jpg)

Photo by Bob n Renee, some rights reserved.

- Swim Team (test2.jpg)

Face Detection With OpenCV

Feature-based face detection algorithms are fast and effective and have been used successfully for decades.

Perhaps the most successful example is a technique called cascade classifiers, first described by Paul Viola and Michael Jones in their 2001 paper titled "Rapid Object Detection using a Boosted Cascade of Simple Features."

In the paper, effective features are learned using the AdaBoost algorithm, although importantly, multiple models are organized into a hierarchy or "cascade."

In the paper, the AdaBoost model is used to learn a range of very simple or weak features in each face, that together provide a robust classifier.

… feature selection is achieved through a simple modification of the AdaBoost procedure: the weak learner is constrained so that each weak classifier returned can depend on only a single feature. As a result each stage of the boosting process, which selects a new weak classifier, can be viewed as a feature selection process.

— Rapid Object Detection using a Boosted Cascade of Simple Features, 2001.
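To make the idea of boosting single-feature weak classifiers concrete, here is a minimal sketch in plain Python. It is not the implementation from the paper or from OpenCV: the function names (train_adaboost, predict), the threshold "stump" weak learner, and the toy data are all assumptions made for illustration. Each round picks the one feature and threshold with the lowest weighted error, which is why each round can be read as a feature selection step.

```python
import math

def train_adaboost(X, y, rounds):
    """Toy AdaBoost. X is a list of feature vectors, y holds labels in {-1, +1}.
    Every weak classifier is a threshold test on a single feature."""
    n = len(X)
    weights = [1.0 / n] * n
    ensemble = []  # entries are (alpha, feature_index, threshold, polarity)
    for _ in range(rounds):
        best = None
        # exhaustive search over single-feature threshold stumps
        for f in range(len(X[0])):
            for threshold in sorted({row[f] for row in X}):
                for polarity in (1, -1):
                    preds = [polarity if row[f] >= threshold else -polarity for row in X]
                    err = sum(w for w, p, t in zip(weights, preds, y) if p != t)
                    if best is None or err < best[0]:
                        best = (err, f, threshold, polarity, preds)
        err, f, threshold, polarity, preds = best
        err = min(max(err, 1e-10), 1 - 1e-10)  # guard against log(0) and division by zero
        alpha = 0.5 * math.log((1 - err) / err)
        ensemble.append((alpha, f, threshold, polarity))
        # misclassified examples gain weight, correct ones lose weight
        weights = [w * math.exp(-alpha * t * p) for w, p, t in zip(weights, preds, y)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble

def predict(ensemble, row):
    """Weighted vote of all selected stumps."""
    score = sum(alpha * (pol if row[f] >= thr else -pol) for alpha, f, thr, pol in ensemble)
    return 1 if score >= 0 else -1

# made-up data: only the first feature separates the two classes
X = [[0.9, 0.2, 0.5], [0.8, 0.7, 0.1], [0.7, 0.4, 0.9],
     [0.2, 0.3, 0.4], [0.1, 0.8, 0.6], [0.3, 0.6, 0.2]]
y = [1, 1, 1, -1, -1, -1]

ensemble = train_adaboost(X, y, rounds=3)
print('selected features:', [f for _, f, _, _ in ensemble])
print('predictions:', [predict(ensemble, row) for row in X])
```

On this toy data the search settles on the first feature in every round, because it is the only one that separates the classes.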

The models are then organized into a hierarchy of increasing complexity, called a "cascade".

Simpler classifiers operate on candidate face regions directly, acting like a coarse filter, whereas complex classifiers operate only on those candidate regions that show the most promise as faces.

… a method for combining successively more complex classifiers in a cascade structure which dramatically increases the speed of the detector by focusing attention on promising regions of the image.

— Rapid Object Detection using a Boosted Cascade of Simple Features, 2001.

The result is a very fast and effective face detection algorithm that has been the basis for face detection in consumer products, such as cameras.
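The speed benefit of a cascade comes from rejecting most candidate regions early. The sketch below illustrates only that control flow; the stage tests and the 'contrast' and 'symmetry' scores are invented for the example and are not the features used by Viola and Jones or by OpenCV.

```python
def run_cascade(region, stages):
    """Return (accepted, stages_evaluated) for one candidate region.
    Stages are ordered from cheapest to most expensive."""
    evaluated = 0
    for _name, stage_test in stages:
        evaluated += 1
        if not stage_test(region):
            return False, evaluated  # early exit: later stages never run
    return True, evaluated

# hypothetical stage tests on a region described by two made-up scores
stages = [
    ('coarse', lambda r: r['contrast'] > 0.2),
    ('medium', lambda r: r['symmetry'] > 0.5),
    ('fine', lambda r: r['contrast'] * r['symmetry'] > 0.4),
]

regions = [
    {'contrast': 0.1, 'symmetry': 0.9},  # rejected by the coarse stage
    {'contrast': 0.6, 'symmetry': 0.3},  # rejected by the medium stage
    {'contrast': 0.8, 'symmetry': 0.9},  # passes every stage
]

results = [run_cascade(region, stages) for region in regions]
for region, (accepted, evaluated) in zip(regions, results):
    status = 'accepted' if accepted else 'rejected'
    print(region, status, 'after', evaluated, 'stage(s)')
```

Only the region that survives every stage pays the cost of the most complex test, which is the property that makes the cascade fast on images where most windows are background.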

Their detector, called detector cascade, consists of a sequence of simple-to-complex face classifiers and has attracted extensive research efforts. Moreover, detector cascade has been deployed in many commercial products such as smartphones and digital cameras.

— Multi-view Face Detection Using Deep Convolutional Neural Networks, 2015.

It is a modestly complex classifier that has also been tweaked and refined over the last nearly 20 years.

A modern implementation of the Classifier Cascade face detection algorithm is provided in the OpenCV library. This is a C++ computer vision library that provides a Python interface. The benefit of this implementation is that it provides pre-trained face detection models, and provides an interface to train a model on your own dataset.

OpenCV can be installed by the package manager system on your platform, or via pip; for example:

```
sudo pip install opencv-python
```

Once the installation process is complete, it is important to confirm that the library was installed correctly.

This can be achieved by importing the library and checking the version number; for example:

```python
# check opencv version
import cv2
# print version number
print(cv2.__version__)
```

Running the example will import the library and print the version. In this case, we are using version 4 of the library.

OpenCV provides the CascadeClassifier class that can be used to create a cascade classifier for face detection. The constructor can take a filename as an argument that specifies the XML file for a pre-trained model.

OpenCV provides a number of pre-trained models as part of the installation. These are available on your system and are also available on the OpenCV GitHub project.

Download a pre-trained model for frontal face detection from the OpenCV GitHub project and place it in your current working directory with the filename 'haarcascade_frontalface_default.xml'.

- Download Open Frontal Face Detection Model (haarcascade_frontalface_default.xml)

Once downloaded, we can load the model as follows:

```python
# load the pre-trained model
classifier = CascadeClassifier('haarcascade_frontalface_default.xml')
```

Once loaded, the model can be used to perform face detection on a photograph by calling the detectMultiScale() function.

This function will return a list of bounding boxes for all faces detected in the photo.

```python
# perform face detection
bboxes = classifier.detectMultiScale(pixels)
# print bounding box for each detected face
for box in bboxes:
    print(box)
```

We can demonstrate this with an example with the college students photograph (test1.jpg).

The photo can be loaded using OpenCV via the imread() function.

```python
# load the photograph
pixels = imread('test1.jpg')
```

The complete example of performing face detection on the college students photograph with a pre-trained cascade classifier in OpenCV is listed below.

```python
# example of face detection with opencv cascade classifier
from cv2 import imread
from cv2 import CascadeClassifier
# load the photograph
pixels = imread('test1.jpg')
# load the pre-trained model
classifier = CascadeClassifier('haarcascade_frontalface_default.xml')
# perform face detection
bboxes = classifier.detectMultiScale(pixels)
# print bounding box for each detected face
for box in bboxes:
    print(box)
```

Running the example first loads the photograph, then loads and configures the cascade classifier; faces are detected and each bounding box is printed.

Each box lists the x and y coordinates for the top-left-hand corner of the bounding box, as well as the width and the height. The results suggest that two bounding boxes were detected.

```
[174 75 107 107]
[360 102 101 101]
```

We can update the example to plot the photograph and draw each bounding box.

This can be achieved by drawing a rectangle for each box directly over the pixels of the loaded image using the rectangle() function that takes two points.

```python
# extract
x, y, width, height = box
x2, y2 = x + width, y + height
# draw a rectangle over the pixels
rectangle(pixels, (x, y), (x2, y2), (0, 0, 255), 1)
```

We can then plot the photograph and keep the window open until we press a key to close it.

```python
# show the image
imshow('face detection', pixels)
# keep the window open until we press a key
waitKey(0)
# close the window
destroyAllWindows()
```

The complete example is listed below.

```python
# plot photo with detected faces using opencv cascade classifier
from cv2 import imread
from cv2 import imshow
from cv2 import waitKey
from cv2 import destroyAllWindows
from cv2 import CascadeClassifier
from cv2 import rectangle
# load the photograph
pixels = imread('test1.jpg')
# load the pre-trained model
classifier = CascadeClassifier('haarcascade_frontalface_default.xml')
# perform face detection
bboxes = classifier.detectMultiScale(pixels)
# print bounding box for each detected face
for box in bboxes:
    # extract
    x, y, width, height = box
    x2, y2 = x + width, y + height
    # draw a rectangle over the pixels
    rectangle(pixels, (x, y), (x2, y2), (0, 0, 255), 1)
# show the image
imshow('face detection', pixels)
# keep the window open until we press a key
waitKey(0)
# close the window
destroyAllWindows()
```

Running the example, we can see that the photograph was plotted correctly and that each face was correctly detected.

College Students Photograph With Faces Detected using OpenCV Cascade Classifier

We can try the same code on the second photograph of the swim team, specifically 'test2.jpg'.

```python
# load the photograph
pixels = imread('test2.jpg')
```

Running the example, we can see that many of the faces were detected correctly, but the result is not perfect.

Note: Your results may vary given the stochastic nature of the algorithm or evaluation procedure, or differences in numerical precision. Consider running the example a few times and compare the average outcome.

We can see that a face on the first or bottom row of people was detected twice, that a face on the middle row of people was not detected, and that the background on the third or top row was detected as a face.

Swim Team Photograph With Faces Detected using OpenCV Cascade Classifier

The detectMultiScale() function provides some arguments to help tune the usage of the classifier. Two parameters of note are scaleFactor and minNeighbors; for example:

```python
# perform face detection
bboxes = classifier.detectMultiScale(pixels, 1.1, 3)
```

The scaleFactor controls how the input image is scaled prior to detection, e.g. is it scaled up or down, which can help to better find the faces in the image. The default value is 1.1 (10% increase), although this can be lowered to values such as 1.05 (5% increase) or raised to values such as 1.4 (40% increase).
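One way to build intuition for the scale factor is to count how many scales would be searched for a given image. The sketch below is a simplified model rather than OpenCV's internal logic: the pyramid_scales() helper is hypothetical, and the 24-pixel base window and the stopping rule are assumptions made for illustration.

```python
def pyramid_scales(image_size, window_size=24, scale_factor=1.1):
    """List the scale multipliers at which a fixed-size detection window
    would be compared against a progressively shrunken image.
    image_size is (width, height); window_size is an assumed base window."""
    if scale_factor <= 1.0:
        raise ValueError('scale_factor must be greater than 1')
    scales = []
    scale = 1.0
    # stop once the shrunken image is smaller than the detection window
    while min(image_size) / scale >= window_size:
        scales.append(scale)
        scale *= scale_factor
    return scales

# compare how many scales each setting would visit on a 600x400 image
for factor in (1.05, 1.1, 1.4):
    levels = pyramid_scales((600, 400), scale_factor=factor)
    print('scale factor', factor, 'searches', len(levels), 'scales')
```

Under these assumptions a smaller scale factor visits many more scales, which suggests why lowering it can find more faces at the cost of more computation.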

The minNeighbors determines how robust each detection must be in order to be reported, e.g. the number of candidate rectangles that found the face. The default is 3, but this can be lowered to 1 to detect a lot more faces, which will likely increase the false positives, or increased to 6 or more to require a lot more confidence before a face is detected.

The scaleFactor and minNeighbors often require tuning for a given image or dataset in order to best detect the faces. It may be helpful to perform a sensitivity analysis across a grid of values and see what works well or best in general on one or multiple photographs.
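A sensitivity analysis of this kind can be sketched as a loop over every combination of the two parameters. The grid_search() helper and the stand-in fake_detect() function below are hypothetical and exist only so the sketch runs without OpenCV; with a real classifier you would pass a function that calls detectMultiScale(), as noted in the comment.

```python
from itertools import product

def grid_search(detect, pixels, scale_factors, min_neighbors_values):
    """Call detect(pixels, scale_factor, min_neighbors) for every combination
    and return {(scale_factor, min_neighbors): number_of_boxes}."""
    counts = {}
    for scale_factor, min_neighbors in product(scale_factors, min_neighbors_values):
        boxes = detect(pixels, scale_factor, min_neighbors)
        counts[(scale_factor, min_neighbors)] = len(boxes)
    return counts

# Stand-in detector with invented behaviour. With OpenCV loaded you could pass:
#   lambda px, sf, mn: classifier.detectMultiScale(px, sf, mn)
def fake_detect(pixels, scale_factor, min_neighbors):
    # stricter min_neighbors reports fewer boxes in this made-up model
    return [(0, 0, 10, 10)] * max(0, 12 - min_neighbors)

counts = grid_search(fake_detect, None, [1.05, 1.1, 1.4], [1, 3, 6, 8])
for (scale_factor, min_neighbors), n in sorted(counts.items()):
    print('scaleFactor=%.2f minNeighbors=%d boxes=%d' % (scale_factor, min_neighbors, n))
```

Counting boxes is only a rough signal; comparing against the known number of faces in each photograph, or inspecting the plots, tells you whether a setting adds true detections or false positives.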

A fast strategy may be to lower (or increase for small photos) the scaleFactor until all faces are detected, then increase the minNeighbors until all false positives disappear, or close to it.

With some tuning, I found that a scaleFactor of 1.05 successfully detected all of the faces, but the background detected as a face did not disappear until a minNeighbors of 8, after which three faces on the middle row were no longer detected.

```python
# perform face detection
bboxes = classifier.detectMultiScale(pixels, 1.05, 8)
```

The results are not perfect, and perhaps better results can be achieved with further tuning, and perhaps post-processing of the bounding boxes.

Swim Team Photograph With Faces Detected Using OpenCV Cascade Classifier After Some Tuning

Face Detection With Deep Learning

A number of deep learning methods have been developed and demonstrated for face detection.

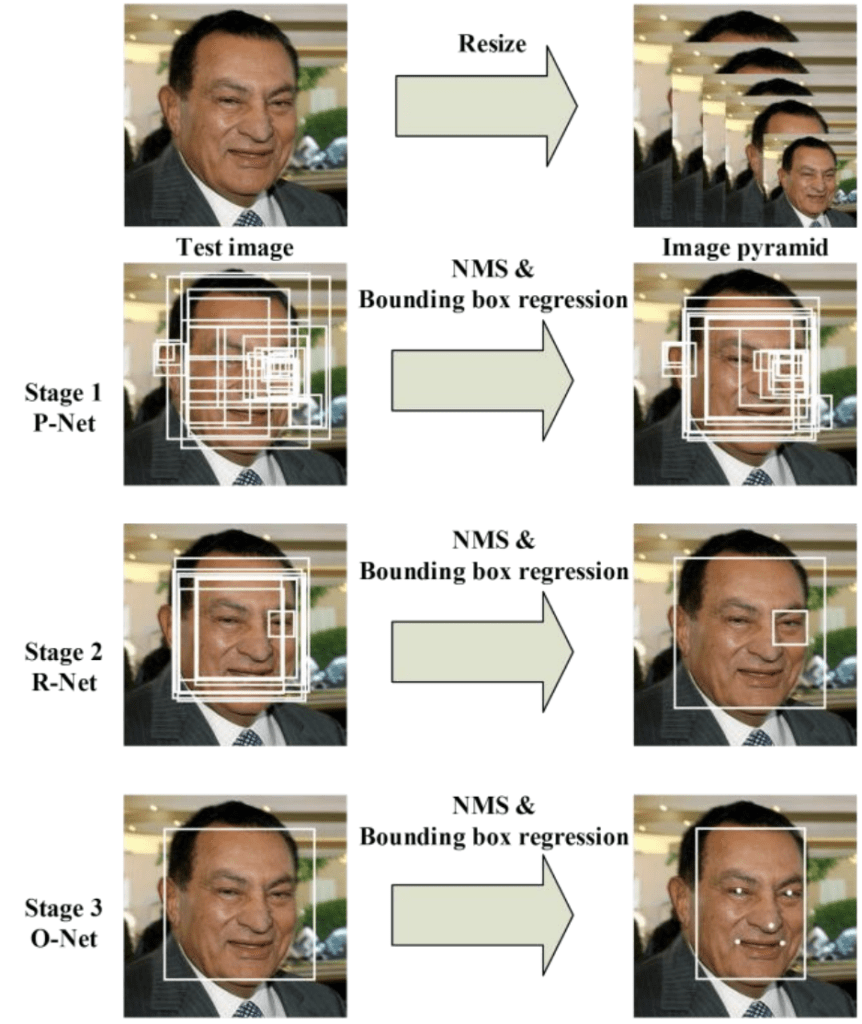

Perhaps one of the more popular approaches is called the "Multi-Task Cascaded Convolutional Neural Network," or MTCNN for short, described by Kaipeng Zhang, et al. in the 2016 paper titled "Joint Face Detection and Alignment Using Multitask Cascaded Convolutional Networks."

The MTCNN is popular because it achieved then state-of-the-art results on a range of benchmark datasets, and because it is capable of also recognizing other facial features such as eyes and mouth, called landmark detection.

The network uses a cascade structure with three networks; first the image is rescaled to a range of different sizes (called an image pyramid), then the first model (Proposal Network or P-Net) proposes candidate facial regions, the second model (Refine Network or R-Net) filters the bounding boxes, and the third model (Output Network or O-Net) proposes facial landmarks.

The proposed CNNs consist of three stages. In the first stage, it produces candidate windows quickly through a shallow CNN. Then, it refines the windows to reject a large number of non-faces windows through a more complex CNN. Finally, it uses a more powerful CNN to refine the result and output facial landmarks positions.

— Joint Face Detection and Alignment Using Multitask Cascaded Convolutional Networks, 2016.

The image below taken from the paper provides a helpful summary of the three stages from top-to-bottom and the output of each stage left-to-right.

Pipeline for the Multi-Task Cascaded Convolutional Neural Network. Taken from: Joint Face Detection and Alignment Using Multitask Cascaded Convolutional Networks.

The model is called a multi-task network because each of the three models in the cascade (P-Net, R-Net and O-Net) is trained on three tasks, e.g. makes three types of predictions; they are: face classification, bounding box regression, and facial landmark localization.

The three models are not connected directly; instead, outputs of the previous stage are fed as input to the next stage. This allows additional processing to be performed between stages; for example, non-maximum suppression (NMS) is used to filter the candidate bounding boxes proposed by the first-stage P-Net prior to providing them to the second-stage R-Net model.
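Non-maximum suppression is simple enough to sketch in a few lines. The version below is a generic greedy NMS in plain Python, not the code used inside any MTCNN implementation; the iou() and non_max_suppression() helpers, the 0.5 overlap threshold, and the example boxes and scores are assumptions chosen for illustration.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x, y, width, height)."""
    ax2, ay2 = a[0] + a[2], a[1] + a[3]
    bx2, by2 = b[0] + b[2], b[1] + b[3]
    inter_w = max(0, min(ax2, bx2) - max(a[0], b[0]))
    inter_h = max(0, min(ay2, by2) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0

def non_max_suppression(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: visit boxes from highest to lowest score and keep a box
    only if it does not overlap an already kept box too much.
    Returns the indices of the kept boxes."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep

# two heavily overlapping candidates and one separate candidate (made-up values)
boxes = [(100, 100, 50, 50), (105, 102, 50, 50), (300, 120, 40, 40)]
scores = [0.90, 0.75, 0.80]

kept = non_max_suppression(boxes, scores)
print([boxes[i] for i in kept])
```

The lower-scoring of the two overlapping boxes is dropped, while the separate box survives because it does not overlap anything that was kept.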

The MTCNN architecture is reasonably complex to implement. Thankfully, there are open source implementations of the architecture that can be trained on new datasets, as well as pre-trained models that can be used directly for face detection. Of note is the official release with the code and models used in the paper, with the implementation provided in the Caffe deep learning framework.

Perhaps the best-of-breed third-party Python-based MTCNN project is called "MTCNN" by Iván de Paz Centeno, or ipazc, made available under a permissive MIT open source license. As a third-party open-source project, it is subject to change, therefore I have a fork of the project at the time of writing available here.

The MTCNN project, which we will refer to as ipazc/MTCNN to differentiate it from the name of the network, provides an implementation of the MTCNN architecture using TensorFlow and OpenCV. There are two main benefits to this project; first, it provides a top-performing pre-trained model and the second is that it can be installed as a library ready for use in your own code.

The library can be installed via pip; for example, with the command `sudo pip install mtcnn`.

After successful installation, you should see a message like:

```
Successfully installed mtcnn-0.1.0
```

You can then confirm that the library was installed correctly via pip; for example, with the command `sudo pip show mtcnn`.

You should see output like that listed below. In this case, you can see that we are using version 0.1.0 of the library.

```
Name: mtcnn
Version: 0.1.0
Summary: Multi-task Cascaded Convolutional Neural Networks for Face Detection, based on TensorFlow
Home-page: http://github.com/ipazc/mtcnn
Author: Iván de Paz Centeno
License: MIT
Location: ...
Requires: opencv-python, keras
Required-by:
```

You can also confirm that the library was installed correctly via Python, as follows:

```python
# confirm mtcnn was installed correctly
import mtcnn
# print version
print(mtcnn.__version__)
```

Running the example will load the library, confirming it was installed correctly; and print the version.

Now that we are confident that the library was installed correctly, we can use it for face detection.

An instance of the network can be created by calling the MTCNN() constructor.

By default, the library will use the pre-trained model, although you can specify your own model via the 'weights_file' argument and specify a path or URL, for example:

```python
model = MTCNN(weights_file='filename.npy')
```

The minimum box size for detecting a face can be specified via the 'min_face_size' argument, which defaults to 20 pixels. The constructor also provides a 'scale_factor' argument to specify the scale factor for the input image, which defaults to 0.709.
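To see how these two arguments interact, it can help to compute the image pyramid they imply. The sketch below follows the general recipe commonly used with MTCNN, where the first network looks at 12x12 pixel cells; the mtcnn_scales() helper is hypothetical and is an illustration under that assumption, not a copy of the library's code.

```python
def mtcnn_scales(height, width, min_face_size=20, scale_factor=0.709, cell_size=12):
    """Scales at which an image would be resized before the first network.
    cell_size=12 is an assumption reflecting the 12x12 input of P-Net."""
    # first scale maps a face of min_face_size pixels onto one 12x12 cell
    m = cell_size / min_face_size
    min_side = min(height, width) * m
    scales = []
    power = 0
    # keep shrinking until the image is smaller than one cell
    while min_side >= cell_size:
        scales.append(m * scale_factor ** power)
        min_side *= scale_factor
        power += 1
    return scales

scales = mtcnn_scales(400, 600)
print(len(scales), 'scales, starting at', round(scales[0], 3))

# a larger minimum face size means fewer scales to process
print(len(mtcnn_scales(400, 600, min_face_size=40)), 'scales with min_face_size=40')
```

Under these assumptions, raising 'min_face_size' or lowering 'scale_factor' shortens the pyramid, trading the ability to find small faces for speed.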

Once the model is configured and loaded, it can be used directly to detect faces in photographs by calling the detect_faces() function.

This returns a list of dict objects, each providing a number of keys for the details of each face detected, including:

- 'box': Providing the x, y of the top left of the bounding box, as well as the width and height of the box.

- 'confidence': The probability confidence of the prediction.

- 'keypoints': Providing a dict with dots for the 'left_eye', 'right_eye', 'nose', 'mouth_left', and 'mouth_right'.
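Because each detection is a plain dict, it is easy to post-process the results. The sketch below shows two small helpers, filter_faces() and eye_distance(), which are hypothetical names introduced here. The sample list only mimics the structure described above; its second entry and the 0.9 threshold are made up for illustration.

```python
def filter_faces(faces, min_confidence=0.9):
    """Keep only detections whose 'confidence' meets the threshold."""
    return [face for face in faces if face['confidence'] >= min_confidence]

def eye_distance(face):
    """Pixel distance between the two eye keypoints of one detection."""
    lx, ly = face['keypoints']['left_eye']
    rx, ry = face['keypoints']['right_eye']
    return ((rx - lx) ** 2 + (ry - ly) ** 2) ** 0.5

# sample data in the shape returned by detect_faces(); values are illustrative
faces = [
    {'box': [186, 71, 87, 115], 'confidence': 0.9994,
     'keypoints': {'left_eye': (207, 110), 'right_eye': (252, 119), 'nose': (220, 143),
                   'mouth_left': (200, 148), 'mouth_right': (244, 159)}},
    {'box': [10, 10, 20, 20], 'confidence': 0.42,
     'keypoints': {'left_eye': (14, 16), 'right_eye': (24, 16), 'nose': (19, 21),
                   'mouth_left': (15, 26), 'mouth_right': (23, 26)}},
]

confident = filter_faces(faces)
for face in confident:
    print(face['box'], round(face['confidence'], 4), round(eye_distance(face), 1))
```

A threshold like this is one simple way to discard weak detections before passing faces to a later system.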

For example, we can perform face detection on the college students photograph as follows:

```python
# face detection with mtcnn on a photograph
from matplotlib import pyplot
from mtcnn.mtcnn import MTCNN
# load image from file
filename = 'test1.jpg'
pixels = pyplot.imread(filename)
# create the detector, using default weights
detector = MTCNN()
# detect faces in the image
faces = detector.detect_faces(pixels)
for face in faces:
    print(face)
```

Running the example loads the photograph, loads the model, performs face detection, and prints a list of each face detected.

```
{'box': [186, 71, 87, 115], 'confidence': 0.9994562268257141, 'keypoints': {'left_eye': (207, 110), 'right_eye': (252, 119), 'nose': (220, 143), 'mouth_left': (200, 148), 'mouth_right': (244, 159)}}
{'box': [368, 75, 108, 138], 'confidence': 0.998593270778656, 'keypoints': {'left_eye': (392, 133), 'right_eye': (441, 140), 'nose': (407, 170), 'mouth_left': (388, 180), 'mouth_right': (438, 185)}}
```

We can draw the boxes on the image by first plotting the image with matplotlib, then creating a Rectangle object using the x, y and width and height of a given bounding box; for example:

```python
# get coordinates
x, y, width, height = result['box']
# create the shape
rect = Rectangle((x, y), width, height, fill=False, color='red')
```

Below is a function named draw_image_with_boxes() that shows the photograph and then draws a box for each bounding box detected.

```python
# draw an image with detected objects
def draw_image_with_boxes(filename, result_list):
    # load the image
    data = pyplot.imread(filename)
    # plot the image
    pyplot.imshow(data)
    # get the context for drawing boxes
    ax = pyplot.gca()
    # plot each box
    for result in result_list:
        # get coordinates
        x, y, width, height = result['box']
        # create the shape
        rect = Rectangle((x, y), width, height, fill=False, color='red')
        # draw the box
        ax.add_patch(rect)
    # show the plot
    pyplot.show()
```

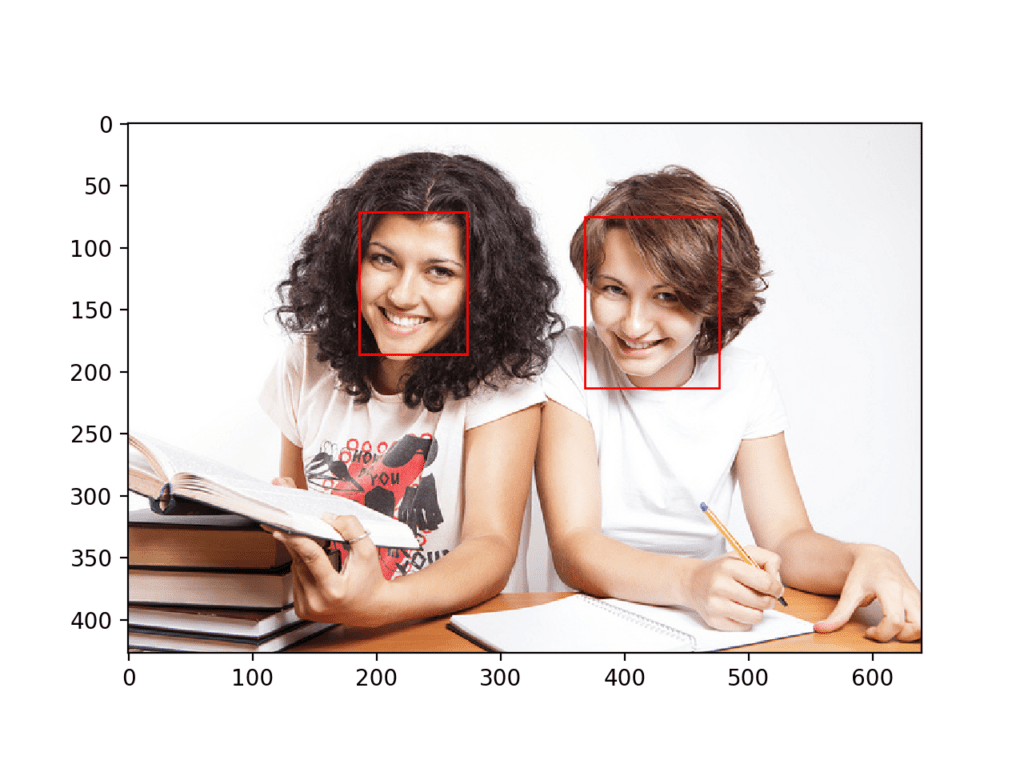

The complete example making use of this function is listed below.

```python
# face detection with mtcnn on a photograph
from matplotlib import pyplot
from matplotlib.patches import Rectangle
from mtcnn.mtcnn import MTCNN

# draw an image with detected objects
def draw_image_with_boxes(filename, result_list):
    # load the image
    data = pyplot.imread(filename)
    # plot the image
    pyplot.imshow(data)
    # get the context for drawing boxes
    ax = pyplot.gca()
    # plot each box
    for result in result_list:
        # get coordinates
        x, y, width, height = result['box']
        # create the shape
        rect = Rectangle((x, y), width, height, fill=False, color='red')
        # draw the box
        ax.add_patch(rect)
    # show the plot
    pyplot.show()

filename = 'test1.jpg'
# load image from file
pixels = pyplot.imread(filename)
# create the detector, using default weights
detector = MTCNN()
# detect faces in the image
faces = detector.detect_faces(pixels)
# display faces on the original image
draw_image_with_boxes(filename, faces)
```

Running the example plots the photograph and then draws a bounding box for each of the detected faces.

We can see that both faces were detected correctly.

College Students Photograph With Bounding Boxes Drawn for Each Detected Face Using MTCNN

We can draw a circle via the Circle class for the eyes, nose, and mouth; for example:

```python
# draw the dots
for key, value in result['keypoints'].items():
    # create and draw dot
    dot = Circle(value, radius=2, color='red')
    ax.add_patch(dot)
```

The complete example with this addition to the draw_image_with_boxes() function is listed below.

```python
# face detection with mtcnn on a photograph
from matplotlib import pyplot
from matplotlib.patches import Rectangle
from matplotlib.patches import Circle
from mtcnn.mtcnn import MTCNN

# draw an image with detected objects
def draw_image_with_boxes(filename, result_list):
    # load the image
    data = pyplot.imread(filename)
    # plot the image
    pyplot.imshow(data)
    # get the context for drawing boxes
    ax = pyplot.gca()
    # plot each box
    for result in result_list:
        # get coordinates
        x, y, width, height = result['box']
        # create the shape
        rect = Rectangle((x, y), width, height, fill=False, color='red')
        # draw the box
        ax.add_patch(rect)
        # draw the dots
        for key, value in result['keypoints'].items():
            # create and draw dot
            dot = Circle(value, radius=2, color='red')
            ax.add_patch(dot)
    # show the plot
    pyplot.show()

filename = 'test1.jpg'
# load image from file
pixels = pyplot.imread(filename)
# create the detector, using default weights
detector = MTCNN()
# detect faces in the image
faces = detector.detect_faces(pixels)
# display faces on the original image
draw_image_with_boxes(filename, faces)
```

The example plots the photograph again with bounding boxes and facial key points.

We can see that eyes, nose, and mouth are detected well on each face, although the mouth on the right face could be better detected, with the points looking a little lower than the corners of the mouth.

College Students Photograph With Bounding Boxes and Facial Keypoints Drawn for Each Detected Face Using MTCNN

We can now try face detection on the swim team photograph, e.g. the image test2.jpg.

Running the example, we can see that all thirteen faces were correctly detected and that it looks roughly like all of the facial keypoints are also correct.

Swim Team Photograph With Bounding Boxes and Facial Keypoints Drawn for Each Detected Face Using MTCNN

We may want to extract the detected faces and pass them as input to another system.

This can be achieved by extracting the pixel data directly out of the photograph; for example:

```python
# get coordinates
x1, y1, width, height = result['box']
x2, y2 = x1 + width, y1 + height
# extract face
face = data[y1:y2, x1:x2]
```
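One detail worth guarding against when slicing is a box that extends past the edge of the image, since a negative start index in a slice counts from the end of the array and would give the wrong crop. The clamp_box() helper below is a hypothetical addition, not part of the MTCNN library, and the example box is made up.

```python
def clamp_box(box, image_width, image_height):
    """Convert (x, y, width, height) to slice bounds that stay inside the image."""
    x, y, width, height = box
    x1, y1 = max(0, x), max(0, y)
    x2, y2 = min(image_width, x + width), min(image_height, y + height)
    return x1, y1, x2, y2

# a made-up box that starts left of the image and runs past the bottom edge
x1, y1, x2, y2 = clamp_box((-5, 12, 60, 80), image_width=100, image_height=80)
print(x1, y1, x2, y2)
# the face could then be extracted as data[y1:y2, x1:x2]
```

For an image loaded with pyplot.imread(), the height and width are the first two entries of data.shape.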

We can demonstrate this by extracting each face and plotting them as separate subplots. You could just as easily save them to file. The draw_faces() function below extracts and plots each detected face in a photograph.

```python
# draw each face separately
def draw_faces(filename, result_list):
    # load the image
    data = pyplot.imread(filename)
    # plot each face as a subplot
    for i in range(len(result_list)):
        # get coordinates
        x1, y1, width, height = result_list[i]['box']
        x2, y2 = x1 + width, y1 + height
        # define subplot
        pyplot.subplot(1, len(result_list), i + 1)
        pyplot.axis('off')
        # plot face
        pyplot.imshow(data[y1:y2, x1:x2])
    # show the plot
    pyplot.show()
```

The complete example demonstrating this function for the swim team photograph is listed below.

```python
# extract and plot each detected face in a photograph
from matplotlib import pyplot
from matplotlib.patches import Rectangle
from matplotlib.patches import Circle
from mtcnn.mtcnn import MTCNN

# draw each face separately
def draw_faces(filename, result_list):
    # load the image
    data = pyplot.imread(filename)
    # plot each face as a subplot
    for i in range(len(result_list)):
        # get coordinates
        x1, y1, width, height = result_list[i]['box']
        x2, y2 = x1 + width, y1 + height
        # define subplot
        pyplot.subplot(1, len(result_list), i + 1)
        pyplot.axis('off')
        # plot face
        pyplot.imshow(data[y1:y2, x1:x2])
    # show the plot
    pyplot.show()

filename = 'test2.jpg'
# load image from file
pixels = pyplot.imread(filename)
# create the detector, using default weights
detector = MTCNN()
# detect faces in the image
faces = detector.detect_faces(pixels)
# display faces on the original image
draw_faces(filename, faces)
```

Running the example creates a plot that shows each separate face detected in the photograph of the swim team.

Plot of Each Separate Face Detected in a Photograph of a Swim Team

Further Reading

This section provides more resources on the topic if you are looking to go deeper.

Papers

- Face Detection: A Survey, 2001.

- Rapid Object Detection using a Boosted Cascade of Simple Features, 2001.

- Multi-view Face Detection Using Deep Convolutional Neural Networks, 2015.

- Joint Face Detection and Alignment Using Multitask Cascaded Convolutional Networks, 2016.

Books

- Chapter 11 Face Detection, Handbook of Face Recognition, Second Edition, 2011.

API

- OpenCV Homepage

- OpenCV GitHub Project

- Face Detection using Haar Cascades, OpenCV.

- Cascade Classifier Training, OpenCV.

- Cascade Classifier, OpenCV.

- Official MTCNN Project

- Python MTCNN Project

- matplotlib.patches.Rectangle API

- matplotlib.patches.Circle API

Articles

- Face detection, Wikipedia.

- Cascading classifiers, Wikipedia.

Summary

In this tutorial, you discovered how to perform face detection in Python using classical and deep learning models.

Specifically, you learned:

- Face detection is a computer vision problem for identifying and localizing faces in images.

- Face detection can be performed using the classical feature-based cascade classifier using the OpenCV library.

- State-of-the-art face detection can be achieved using a Multi-task Cascade CNN via the MTCNN library.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.

Source: https://machinelearningmastery.com/how-to-perform-face-detection-with-classical-and-deep-learning-methods-in-python-with-keras/

Posted by: gibbonsnale1948.blogspot.com
